
Category Archives: Scripts

Scaling Azure VM with Azure Automation, with help of Azure SQL

I run a number of virtual machines in Microsoft Azure, some for just a couple of hours and some 24/7. Often I don't need them during the night, but I still want to keep some of them online to make sure database sync jobs run. Previously I used a couple of different PowerShell scripts to start and stop virtual machines, but now I have built a new solution and want to share the idea with you. Besides the virtual machines, the solution involves two components: Azure Automation and Azure SQL. Azure Automation (currently in preview) is an automation engine that you can buy as a service in Microsoft Azure. If you have worked with Service Management Automation (SMA) you will recognize the feature. You author runbooks as PowerShell workflows and execute them in Microsoft Azure, the same way as with SMA, except that you don't have to handle the SMA infrastructure. Azure SQL is another cool cloud service, a relational database-as-a-service.

Figure 1: Azure Automation

Figure 2: Azure SQL Database

In Azure Automation I have created two runbooks, VM-MorningStart and VM-EveningStop: one that runs every morning and one that runs every evening. These runbooks read configuration from the Azure SQL database about how the virtual machines should be configured during the night and the day. Azure Automation has a schedule feature that can trigger these runbooks once every day, as shown in figure 3.

Figure 3: Schedule to invoke runbook

Both runbooks are built the same way. They look up the servers in the database and compare the current settings with the settings in the database. The database has one column for daytime server settings and one for night-time server settings. If the current setting does not match the database, the virtual machine is shut down, reconfigured and started again. I use Azure AD to connect to the Azure subscription according to this blog post, and I use SQL authentication to log on to the SQL server. If a virtual machine is set to size 0 in the database, it means the machine should be turned off during the night.

workflow VM-EveningStop
{
    ### SETUP AZURE CONNECTION
    $Cred = Get-AutomationPSCredential -Name 'azureautomation@domainnameAD.onmicrosoft.com'
    Add-AzureAccount -Credential $Cred

    ### Connect to SQL and process each server
    InlineScript {
        $UserName = "azuresqluser"
        $UserPassword = Get-AutomationVariable -Name 'Azure SQL user password'
        $ServerName = "sqlservername.database.windows.net"
        $Database = "GoodNightServers"

        $MasterDatabaseConnection = New-Object System.Data.SqlClient.SqlConnection
        $MasterDatabaseConnection.ConnectionString = "Server = $ServerName; Database = $Database; User ID = $UserName; Password = $UserPassword;"
        $MasterDatabaseConnection.Open()

        $MasterDatabaseCommand = New-Object System.Data.SqlClient.SqlCommand
        $MasterDatabaseCommand.Connection = $MasterDatabaseConnection
        $MasterDatabaseCommand.CommandText = "SELECT * FROM Servers"
        $MasterDbResult = $MasterDatabaseCommand.ExecuteReader()

        Select-AzureSubscription -SubscriptionName "Windows Azure MSDN - Visual Studio Ultimate"

        while ($MasterDbResult.Read())
        {
            # Index 1 is matched against the VM name, index 2 is the size this runbook applies
            $VMName = $MasterDbResult[1]
            $NightSize = $MasterDbResult[2]

            $AzureVM = Get-AzureVM | Where-Object {$_.InstanceName -eq $VMName}
            if (-not $AzureVM) { continue }

            # Stop the VM; it stays stopped when the size in the database is 0
            Get-AzureVM -ServiceName $AzureVM.ServiceName -Name $AzureVM.Name | Stop-AzureVM -Force

            if ($NightSize -ne "0") {
                # Resize the VM and start it again
                Get-AzureVM -ServiceName $AzureVM.ServiceName -Name $AzureVM.Name | Set-AzureVMSize -InstanceSize $NightSize | Update-AzureVM
                Get-AzureVM -ServiceName $AzureVM.ServiceName -Name $AzureVM.Name | Start-AzureVM
            }
        }

        # Release the reader and the database connection
        $MasterDbResult.Close()
        $MasterDatabaseConnection.Close()
    }
}
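The compare-then-act idea behind both runbooks can be sketched separately from the Azure cmdlets. The following is a minimal Python illustration, not the runbook itself: the names `ServerRow` and `plan_actions` and the step labels are hypothetical, and it assumes that the target size "0" means the machine should stay stopped.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ServerRow:
    """One row from the configuration database (hypothetical shape)."""
    name: str
    target_size: str  # "0" means: keep the VM stopped for this period


def plan_actions(row: ServerRow, current_size: Optional[str], running: bool) -> List[str]:
    """Return the ordered steps needed to bring one VM to its target state."""
    if row.target_size == "0":
        # The VM should be off; only act if it is still running.
        return ["stop"] if running else []
    if current_size == row.target_size:
        # Size already matches; just make sure the VM is running.
        return [] if running else ["start"]
    # Size differs: stop (if needed), resize, then start again.
    steps = ["stop"] if running else []
    steps += [f"resize:{row.target_size}", "start"]
    return steps


if __name__ == "__main__":
    print(plan_actions(ServerRow("vm1", "Large"), "Small", True))
```

Planning the steps first makes it easy to skip machines that already match the database, so they are not restarted for nothing.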

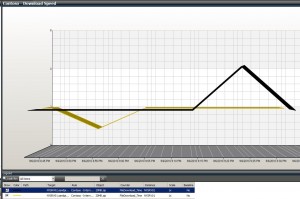

The database is designed according to this figure.

Summary: Azure Automation runs one runbook in the morning to start and scale up virtual machines, and one runbook in the evening to scale down and shut down virtual machines. All configuration is stored in an Azure SQL database.

Note that this is provided “AS-IS” with no warranties at all. This is not a production ready management pack or solution for your production environment, just an idea and an example.

Copy or rename a SMA runbook

You may have noticed that in the Windows Azure portal there is no way to copy or rename an SMA runbook. But it is of course possible with a small PowerShell script. The script in this blog post copies a source SMA runbook and stores all its settings in a new SMA runbook. The script also imports the new runbook and deletes the old one. You can comment out (#) the last part if you just want to copy your source runbook and not delete it. If you keep the last part, which deletes the source runbook, the result of the script is a rename of the runbook.

$path = "C:\temp"
$targetrunbookname = "newdebugexample"
$sourcerunbookname = "debugexample"
$WebServiceEndpoint = "https://wap01"
$targetfile = Join-Path $path "$targetrunbookname.ps1"

##
## Get source runbook
##
$sourcesettings = Get-SmaRunbook -WebServiceEndpoint $WebServiceEndpoint -Name $sourcerunbookname
$source = Get-SmaRunbookDefinition -WebServiceEndpoint $WebServiceEndpoint -Name $sourcerunbookname -Type Published

##
## Create the new runbook as a file with source workflow and replace workflow name
##
# -replace takes a regular expression, so escape the source runbook name
$word = "workflow " + [regex]::Escape($sourcerunbookname)
$replacement = "workflow $targetrunbookname"
$newText = $source.Content -replace $word,$replacement
$newText | Out-File -FilePath $targetfile

##
## Import new runbook to SMA and set runbook configuration
##
Import-SmaRunbook -WebServiceEndpoint $WebServiceEndpoint -Path $targetfile -Tags $sourcesettings.Tags
Set-SmaRunbookConfiguration -WebServiceEndpoint $WebServiceEndpoint -Name $targetrunbookname -LogDebug $sourcesettings.LogDebug -LogVerbose $sourcesettings.LogVerbose -LogProgress $sourcesettings.LogProgress -Description $sourcesettings.Description

##
## Delete source runbook (comment out this part to copy instead of rename)
##
Remove-SmaRunbook -WebServiceEndpoint $WebServiceEndpoint -Name $sourcerunbookname -Confirm:$false
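The key step in the script is rewriting the workflow name inside the runbook definition. Here is a small Python sketch of that text replacement, under the assumption that the definition contains one `workflow <name>` declaration. The function name `rename_workflow` is hypothetical. It escapes the source name and refuses to match a longer name that merely starts with it.

```python
import re


def rename_workflow(definition: str, source_name: str, target_name: str) -> str:
    """Replace the first 'workflow <source_name>' declaration with the target name."""
    pattern = re.compile(
        r"\bworkflow\s+" + re.escape(source_name) + r"(?![\w-])",
        re.IGNORECASE,
    )
    # A function replacement avoids any special handling of backslashes in target_name.
    return pattern.sub(lambda match: "workflow " + target_name, definition, count=1)


if __name__ == "__main__":
    text = "workflow debugexample\n{\n    Write-Output 'hello'\n}\n"
    print(rename_workflow(text, "debugexample", "newdebugexample"))
```

Only the declaration is touched, so other occurrences of the old name in the runbook body, for example in strings, are left alone.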

Note that this is provided “AS-IS” with no warranties at all. This is not a production ready solution, just an idea and an example.

Deploy a new service instance with Powershell in VMM 2012

I read on TechNet that Microsoft recommends using service templates in VMM 2012 even for a single server. Instead of using a VM template we should use a service template to deploy a new virtual machine. In Orchestrator there is an activity in the Virtual Machine Manager (VMM) integration pack that can create a new virtual machine from a template, but there is no activity to create a new instance from a service template. So I copy/paste “wrote” a script that deploys a new instance of a service, based on a service template. This is a very basic and simple example script that you can use as a foundation. The script asks for five input parameters

- CloudName = Target cloud in VMM for the new service

- SvcName = Name of the new service. As I only deploy a single server I use the computer name as service name too

- ComputerANDvmName = The name that will be used both for the virtual machine and the computer name

- SvcTemplateName = The service template to use

- Description = A description that will be added to the new service instance

Param(
    [parameter(Mandatory=$true)]
    $CloudName,
    [parameter(Mandatory=$true)]
    $SvcName,
    [parameter(Mandatory=$true)]
    $ComputerANDvmName,
    [parameter(Mandatory=$true)]
    $SvcTemplateName,
    [parameter(Mandatory=$true)]
    $Description)

Import-Module 'C:\Program Files\Microsoft System Center 2012\Virtual Machine Manager\bin\psModules\virtualmachinemanager\virtualmachinemanager.psd1'

# Create and configure the service deployment configuration
$cloud = Get-SCCloud -Name $CloudName
$SvcTemplate = Get-SCServiceTemplate -Name $SvcTemplateName
$SvcConfig = New-SCServiceConfiguration -ServiceTemplate $SvcTemplate -Name $SvcName -Cloud $cloud -Description $Description
$WinSrvtierConfig = Get-SCComputerTierConfiguration -ServiceConfiguration $SvcConfig | where { $_.Name -like "Windows*" }
$vmConfig = Get-SCVMConfiguration -ComputerTierConfiguration $WinSrvtierConfig
Set-SCVMConfiguration -VMConfiguration $vmConfig -Name $ComputerANDvmName -ComputerName $ComputerANDvmName

# Update the configuration and deploy the new service instance
Update-SCServiceConfiguration -ServiceConfiguration $SvcConfig
$newSvcInstance = New-SCService -ServiceConfiguration $SvcConfig

The script first creates and configures a service deployment configuration. The service deployment configuration is an object stored in the VMM library that describes the new service instance, but it is not running. The last two lines in the script pick up that service deployment configuration and deploy it. All settings of the new service instance are stored in the service deployment configuration. In my service template, named “Contoso Small”, I have a tier named “Windows Server 2008R2 Enterprise – Machine Tier 1”, which is why the script searches for a tier with a name like “Windows*”.

Once we have a PowerShell script, we can easily use it from a runbook in Orchestrator to deploy new instances of services.

Note that the script is provided “AS-IS” with no warranties.

Maintenance Mode Report (part II)

In the Notification and reporting for maintenance mode post we created a report for every object that is in maintenance mode. I updated that script today: instead of showing all objects that are in maintenance mode, the report now only shows computer objects. You can download the script MMReport.txt (rename to .ps1). As you can see on the last two lines in the script, the script stops itself. These lines are needed if you want to run the script from Orchestrator with the “Run Program” activity, otherwise the activity will not finish and move on in the runbook.

$objCurrentPSProcess = [System.Diagnostics.Process]::GetCurrentProcess();

Stop-Process -Id $objCurrentPSProcess.ID;

If you want to run this in Orchestrator, for example every 15 minutes to generate an updated maintenance mode report, you can use a “Monitor Date/Time” activity followed by a “Run Program” activity. You can configure the “Run Program” activity with the following settings

- Program execution

- Computer: FIELD-SCO01 (name of a suitable server with Operations Manager shell installed)

- Program path: powershell.exe

- Parameters: -command C:\scripts\MMreport.ps1

- Working folder: (no value)

Remember that your Orchestrator runbook server service account needs permissions in Operations Manager to get the info. With this sample script the output file will be C:\temp\MMreport.htm. Thanks to Stefan Stranger for PowerShell ideas.

Please note that this is provided “as is” with no warranties at all.

Monitor the internet connection as a client

“Hello, is it IT support? My Internet is slow”

Have you heard that before? Last week a customer asked me if we could monitor how long it takes to download a file from a client workstation, and present it in a nice performance view in the Operations Manager console. Of course we can, with a script in a rule. The script downloads a file from the Internet, measures the time it takes and reports it back to Operations Manager as performance data. You can control which machines run the script, so you can select some workstations or machines in remote offices. If the download takes more than 3 seconds, the script generates a local event. If there is a problem downloading the file, it also generates a local event. You can configure Operations Manager to pick up this event and generate an alert. Thanks to Patrik, the script can detect if the file we are trying to download is missing or if there are any other download-related problems. I have attached the script I used, and below are the steps that you need to take to implement it.
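The attached script is VBScript and is not reproduced here. As a rough illustration of the same idea (time a download, compare it against a threshold, treat download failures as their own case), here is a minimal Python sketch. The names `http_fetch` and `timed_download` and the status strings are hypothetical, and the threshold is a parameter rather than a fixed value.

```python
import time
import urllib.request
from typing import Callable, Tuple


def http_fetch(url: str) -> bytes:
    """Download the whole file into memory (default fetcher)."""
    with urllib.request.urlopen(url, timeout=60) as response:
        return response.read()


def timed_download(url: str, threshold_seconds: float,
                   fetch: Callable[[str], bytes] = http_fetch) -> Tuple[str, float]:
    """Return (status, elapsed_seconds); status is 'ok', 'slow' or 'error'."""
    start = time.monotonic()
    try:
        fetch(url)
    except OSError:
        # Covers missing files, DNS failures, timeouts and similar problems.
        return ("error", time.monotonic() - start)
    elapsed = time.monotonic() - start
    status = "slow" if elapsed > threshold_seconds else "ok"
    return (status, elapsed)
```

The elapsed value corresponds to what the rule would report as performance data, while "slow" and "error" correspond to the cases where the script writes a local event.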

To use this script, follow these steps

- In the Operations Manager console, navigate to the Authoring workspace, right-click Rules and select Create New Rule

- In the Create Rule Wizard, select to create a Probe Based/Script (Performance) rule, select a suitable management pack for example Contoso – Internet Download

- On the Rule Name and Description page, input a rule name for example “Contoso – Internet Download – Download 20 Mb file”. Select a suitable target for example Windows Server. Uncheck the “Rule is enabled” box.

- On the Schedule page, input how often you want the script to run. For example every 10 minutes

- On the Script page, clear the script text box and paste the script from the attached file. Configure the timeout to 5 minutes. Change the script name to ContosoDownloadscript.vbs

- On the Performance Mapper page, input

- Object: 20MB.zip

- Counter: FileDownload_Time

- Instance: select Netbios computer name from the menu

- Value: $Data/Property[@Name='Contoso_PerfValue']$

- Create the rule

Now you have a rule targeted at all Windows Servers, but it is disabled. To control which machines run it, create a new group, save it in the same management pack (important!) and add some Windows servers as explicit members. These machines will run the script and download the file.

- In the Operations Manager console, navigate to the Authoring workspace, right-click Groups and select Create a new Group

- In the Create Group Wizard, input a group name, for example “Contoso – Internet Download nodes”. Select the same management pack as you used before, in my case Contoso – Internet Download

- On the Explicit Member page, click Add/Remove Objects

- In the Search for drop-down menu, select Windows Server. Then in the name field, input the machine or machines that you want to use as watcher nodes and add them under Selected objects

- Click next a couple of times in the wizard and then save and close it

To enable the rule against the machines in this group,

- Find the rule again under Authoring and rules

- Right-click it and select Overrides, Override the Rule, For a group, select your group

- In the Override properties window, select enable and configure the override value to TRUE. Click OK to save.

The next step is to configure a rule to pick up the events. These are the two events that you want to generate alerts on

- Navigate to Authoring workspace, right-click Rules, select Create a new rule…

- In the Create Rule Wizard, select to create an Alert Generating Rule, Event Based, NT Event Log (Alert). Select the same MP again, in my case Contoso – Internet Download

- On the General page, input a name, for example Contoso – Internet Download – Event rule. Select “Windows Server” as rule target. Uncheck the “Rule is enabled” check box

- On the Event Log Type page, select Application

- On the Build Event Expression page, input

- Event ID Equals 2

- Event Source Equals WSH

- On the Configure Alerts page, click Alert suppression…

- In the Alert Suppression window, select Event ID, Logging Computer, Event Source, click OK

- On the Configure Alerts page, click Create

Now we need to enable this for the machines that we use as watcher nodes. As we created this rule with “Rule is enabled” unchecked, no machine is running it right now.

- Find the rule again under Authoring and rules

- Right-click it and select Overrides, Override the Rule, For a group, select your group

- In the Override properties window, select enable and configure the override value to TRUE. Click OK to save.

The last step could be to create the performance view

- In the console, navigate to the Monitoring workspace

- Select the folder for your MP, in my case “Contoso – Internet download”. Right-click it and select New > Performance View

- In the Properties window input

- Name: Contoso - Download speed

- Show data contained in a specific group: Select your group, in my case “Contoso – Internet download nodes”

- Object: 20MB.zip

- Counter: FileDownload_Time

- Click OK to save your performance view

If you want to change the file that we download, or the destination folder, you need to modify the script and the lines highlighted below

If you want to change the default 5 seconds threshold on the download, you need to modify the following line

Download the script here, Internetdownload

Check Last Line Only

I wrote a script that checks only the last line of a file. The script checks the last line every time it runs. If you search my blog you will find a number of scripts for reading logfiles. Create a new two state script monitor and include the script below. In my example the script looks in the C:\temp\myfile.txt file for the word “Warning” ( varWarPos = Instr(strLine, "Warning") )

- Unhealthy Expression

- Property[@Name='Status'] Contains warning

- Healthy Expression

- Property[@Name='Status'] Contains ok

- Alert description

- You could write any alert description here, but if you include the following parameters you will see the whole line and the status in the alert description.

- State $Data/Context/Property[@Name='Status']$

- Line $Data/Context/Property[@Name='Line']$
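As an illustration of the last-line check, here is a minimal Python sketch. It is not the VBScript from the post. The function name `check_last_line` is hypothetical, and it assumes that trailing empty lines should be ignored when deciding which line is the last one. It returns the same two values the monitor uses, a status and the line itself.

```python
from typing import Tuple


def check_last_line(path: str, keyword: str = "Warning") -> Tuple[str, str]:
    """Return (status, last_line) where status is 'warning' or 'ok'."""
    last = ""
    with open(path, "r", encoding="utf-8", errors="replace") as handle:
        for line in handle:
            if line.strip():
                # Remember the most recent non-empty line.
                last = line.rstrip("\r\n")
    # Case-sensitive substring test, like InStr with its default comparison.
    status = "warning" if keyword in last else "ok"
    return (status, last)
```

Because only the final line decides the state, an old warning further up in the file no longer keeps the monitor unhealthy once a newer line has been written.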

Update resolution state with a script

This is a script you can use to update the resolution state. It looks at all new alerts (resolutionstate = 0) and checks whether the alert description contains “domain” or “AD”; if it does, the script sets a new resolution state. The ID for the resolution state can be found under alert settings in the Administration workspace in the Operations Manager console. You can create a scheduled task that runs this script every X minutes to update all new alerts.

$RMS = "myRMSHost"
Add-PSSnapin "Microsoft.EnterpriseManagement.OperationsManager.Client"
Set-Location "OperationsManagerMonitoring::"
New-ManagementGroupConnection -ConnectionString:$RMS
Set-Location $RMS

# ID of the resolution state to set
$resState = 42

# -match is a case-insensitive regular expression match
$alerts = get-alert -criteria 'ResolutionState ="0"' | where-object {($_.Description -match "AD") -or ($_.Description -match "domain")}

If ($alerts) {
    foreach ($alert in $alerts)
    {
        $alert.ResolutionState = $resState
        $alert.Update("")
    }
}

The scheduled task command can be

C:\WINDOWS\system32\windowspowershell\v1.0\powershell.exe -command C:\myscripts\change_resolutionstate.ps1
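One thing to watch with keyword matching on descriptions: a case-insensitive substring match on "AD" also hits words such as "load" or "read". The following Python sketch shows a stricter variant of the same triage logic. The alert dictionaries and the names `needs_new_state` and `triage` are hypothetical stand-ins for the Operations Manager alert objects.

```python
import re
from typing import Dict, List


def needs_new_state(alert: Dict[str, object]) -> bool:
    """True for new alerts whose description mentions AD (as a word) or 'domain'."""
    description = str(alert.get("description", ""))
    if alert.get("resolution_state") != 0:
        return False
    mentions_ad = re.search(r"\bAD\b", description) is not None
    mentions_domain = "domain" in description.lower()
    return mentions_ad or mentions_domain


def triage(alerts: List[Dict[str, object]], new_state: int) -> List[Dict[str, object]]:
    """Set new_state on every matching alert and return the alerts that changed."""
    updated = []
    for alert in alerts:
        if needs_new_state(alert):
            alert["resolution_state"] = new_state
            updated.append(alert)
    return updated
```

Whether the stricter match is wanted depends on how the alert descriptions in your environment are worded, so treat the word-boundary rule as one option rather than a fix.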

How-to monitor file size

This script can be used with a monitor to monitor the size of a file. In this example it monitors the file C:\temp\myfile.txt and generates an alert if the file is larger than 50 MB (52428800 bytes). Settings for your two state script monitor can be

- General

- Name: Contoso – check file monitor

- Monitor Target: choose a suitable class

- Management Pack: choose a suitable MP

- Schedule

- Run every 15 Minutes

- Script

- File Name: contosocheckfilesize.vbs

- Script: see below

- Unhealthy Expression

- Property[@Name='Status'] does not contain Ok

- Healthy Expression

- Property[@Name='Status'] contains Ok

- Health

- Healthy: Operational State Healthy, Health State Healthy

- Unhealthy: Operational State Unhealthy, Health State Warning

- Alerting

- check Generate alerts for this monitor

- check Automatically resolve the alert when the monitor returns to a healthy state

- Alert Name: Contoso – File Size monitor

- Alert Description: Warning. C:\temp\myfile.txt is too big. The file is $Data/Context/Property[@Name='Size']$ bytes.

Dim oAPI, oBag

' Read the current size of the file in bytes
Set objFSO = CreateObject("Scripting.FileSystemObject")
Set objFile = objFSO.GetFile("C:\temp\myfile.txt")
varSize = objFile.Size

Set oAPI = CreateObject("MOM.ScriptAPI")
Set oBag = oAPI.CreatePropertyBag()

' 52428800 bytes = 50 MB
If varSize > 52428800 Then
    Call oBag.AddValue("Status","Bad")
    Call oBag.AddValue("Size", varSize)
Else
    Call oBag.AddValue("Status","Ok")
End If

Call oAPI.Return(oBag)
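The same threshold check can be written in a few lines of Python, shown here only to make the logic and the byte arithmetic explicit. The function name `check_file_size` is hypothetical, and the returned dictionary stands in for the property bag.

```python
import os
from typing import Dict

LIMIT_BYTES = 50 * 1024 * 1024  # 50 MB expressed in bytes


def check_file_size(path: str, limit_bytes: int = LIMIT_BYTES) -> Dict[str, object]:
    """Return a property-bag-like dict with Status ('Ok' or 'Bad') and Size in bytes."""
    size = os.path.getsize(path)
    # Strictly greater than the limit is unhealthy, exactly at the limit is still Ok.
    status = "Bad" if size > limit_bytes else "Ok"
    return {"Status": status, "Size": size}
```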

Investigate most common alert

The following SQL queries can be used to list first which machine or path, and then which rules or monitors, generate the most alerts in your environment. The first query shows which computers or paths generate the most alerts. The second query shows which rules or monitors generate the most alerts on a single machine or path. Run both queries against your data warehouse database (OperationsManagerDW).

SELECT
    vManagedEntity.Path, COUNT(1) AS pathcount
FROM Alert.vAlertDetail INNER JOIN
    Alert.vAlert ON Alert.vAlertDetail.AlertGuid = Alert.vAlert.AlertGuid INNER JOIN
    vManagedEntity ON Alert.vAlert.ManagedEntityRowId = vManagedEntity.ManagedEntityRowId
GROUP BY vManagedEntity.Path
ORDER BY pathcount DESC

SELECT
    Alert.vAlert.AlertName,
    Alert.vAlert.AlertDescription,
    vManagedEntity.Path, COUNT(1) AS alertcount
FROM Alert.vAlertDetail INNER JOIN
    Alert.vAlert ON Alert.vAlertDetail.AlertGuid = Alert.vAlert.AlertGuid INNER JOIN
    vManagedEntity ON Alert.vAlert.ManagedEntityRowId = vManagedEntity.ManagedEntityRowId
WHERE Path = 'opsmgr29.hq.contoso.local'
GROUP BY Alert.vAlert.AlertName, Alert.vAlert.AlertDescription, vManagedEntity.Path
ORDER BY alertcount DESC

You could also use these queries in a report; take a look at this post about authoring custom reports.
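To try the group-and-count pattern without a data warehouse, here is a small self-contained Python sketch that runs the same kind of aggregation on an in-memory SQLite table. The table `alerts`, its two columns and the function `top_alert_paths` are hypothetical simplifications, not the OperationsManagerDW schema.

```python
import sqlite3
from typing import Iterable, List, Tuple


def top_alert_paths(rows: Iterable[Tuple[str, str]]) -> List[Tuple[str, int]]:
    """rows are (alert_name, path) pairs; returns (path, count), most frequent first."""
    con = sqlite3.connect(":memory:")
    con.execute("CREATE TABLE alerts (alert_name TEXT, path TEXT)")
    con.executemany("INSERT INTO alerts VALUES (?, ?)", list(rows))
    result = con.execute(
        "SELECT path, COUNT(1) AS pathcount FROM alerts "
        "GROUP BY path ORDER BY pathcount DESC, path ASC"
    ).fetchall()
    con.close()
    return result


if __name__ == "__main__":
    sample = [("Disk full", "srv1"), ("Disk full", "srv1"), ("CPU", "srv2")]
    print(top_alert_paths(sample))
```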

Reading a logfile with a 3 state monitor

If you build a monitor to monitor a logfile, Operations Manager will remember which line it read last. Operations Manager will only look for new keywords below that line; it will not read the whole file again. I did a lot of tests with logfile monitoring, read more about them here. If you need to get Operations Manager to read the whole logfile each time, you can use a script like this:

Const ForReading = 1

Set oAPI = CreateObject("MOM.ScriptAPI")
Set oBag = oAPI.CreatePropertyBag()

Set objFSO = CreateObject("Scripting.FileSystemObject")
Set objTextFile = objFSO.OpenTextFile _
    ("c:\temp\file.txt", ForReading)

Do Until objTextFile.AtEndOfStream
    strText = objTextFile.ReadLine

    ' Remember the latest line that contains "Warning"
    varWarPos = Instr(strText, "Warning")
    If varWarPos > 0 Then
        varStatus = "Warning"
        varLine = strText
    End If

    ' Return immediately on the first line that contains "Critical"
    varCriPos = Instr(strText, "Critical")
    If varCriPos > 0 Then
        Call oBag.AddValue("Line", strText)
        Call oBag.AddValue("Status","critical")
        Call oAPI.Return(oBag)
        Wscript.Quit(0)
    End If
Loop

objTextFile.Close

If varStatus = "Warning" Then
    Call oBag.AddValue("Line", varLine)
    Call oBag.AddValue("Status","warning")
    Call oAPI.Return(oBag)
    Wscript.Quit(0)
Else
    Call oBag.AddValue("Status","ok")
    Call oAPI.Return(oBag)
End If

This script will read the file (c:\temp\file.txt) line by line. The script is looking for two keywords in the logfile, “Warning” and “Critical”. If there is a “Critical” in a line the script will send back a bag with status=Critical and the script will stop. If there is a “Warning” in the line the script will continue, as there might be a “critical” somewhere too. If there was only “Warning” the script will send back status=Warning. If there was no “Warning” or “Critical” the script will send back status=ok.
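The scan order described above (stop at the first "Critical", otherwise remember the latest "Warning") can be sketched in Python as follows. The function name `scan_log` is hypothetical, and the returned tuple stands in for the Status and Line values in the property bag.

```python
from typing import Iterable, Optional, Tuple


def scan_log(lines: Iterable[str]) -> Tuple[str, Optional[str]]:
    """Return (status, line) where status is 'critical', 'warning' or 'ok'."""
    warning_line = None
    for raw in lines:
        line = raw.rstrip("\r\n")
        if "Critical" in line:
            # The first critical line wins and ends the scan.
            return ("critical", line)
        if "Warning" in line:
            # Keep scanning: a critical line may still follow.
            warning_line = line
    if warning_line is not None:
        return ("warning", warning_line)
    return ("ok", None)


if __name__ == "__main__":
    print(scan_log(["Warning a", "Critical x", "Warning c"]))
```

With a real file you could pass the open file handle directly, since iterating over it yields one line at a time.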

If there is a “Warning” or “Critical” the script will also put that line into a bag, and send it back to Operations Manager. You will see this line in the alert description. To use this script, you can configure a monitor like this:

- Create a new monitor of type Scripting/Generic/Timed Script Three State Monitor. Input a suitable name and target. More about targeting here.

- Schedule

- Configure your script to run every X minutes. The script will read the whole logfile each time

- Script

- Filename and Timeout, for example CheckFile.vbs and 2 minutes

- Paste the script in the script field

- Unhealthy expression

- Property[@Name='Status']

- Equals

- critical

- Degraded expression

- Property[@Name='Status']

- Equals

- warning

- Healthy expression

- Property[@Name='Status']

- Equals

- ok

- Alerting

- Check Generate alerts for this monitor

- Generate an alert when: The monitor is in a critical or warning health state

- Check Automatically resolve the alert when the monitor returns to a healthy state

- Alert name: Input an alert name

- Alert Description

- State $Data/Context/Property[@Name='Status']$

- Line $Data/Context/Property[@Name='Line']$

Summary: This monitor, including the script, will read a logfile and generate alerts based on keywords. It will read the whole logfile each time and look for two different keywords.

Opalis lab in the hammock
